Being first to market is great but being the first to respond to user feedback in the market—that’s the kingmaker. New advances in generative AI hold incredible promise for enterprises to be nimbler and more responsive. However, this is uncharted territory. Few enterprises have a roadmap for incorporating generative AI into their workflows.

This guide can help. After adopting generative AI into our own organization and helping enterprises do the same, we’ve created an overview of the best practices for making the most of these powerful new technologies.

Supercharging The Traditional Product Workflow with Generative AI

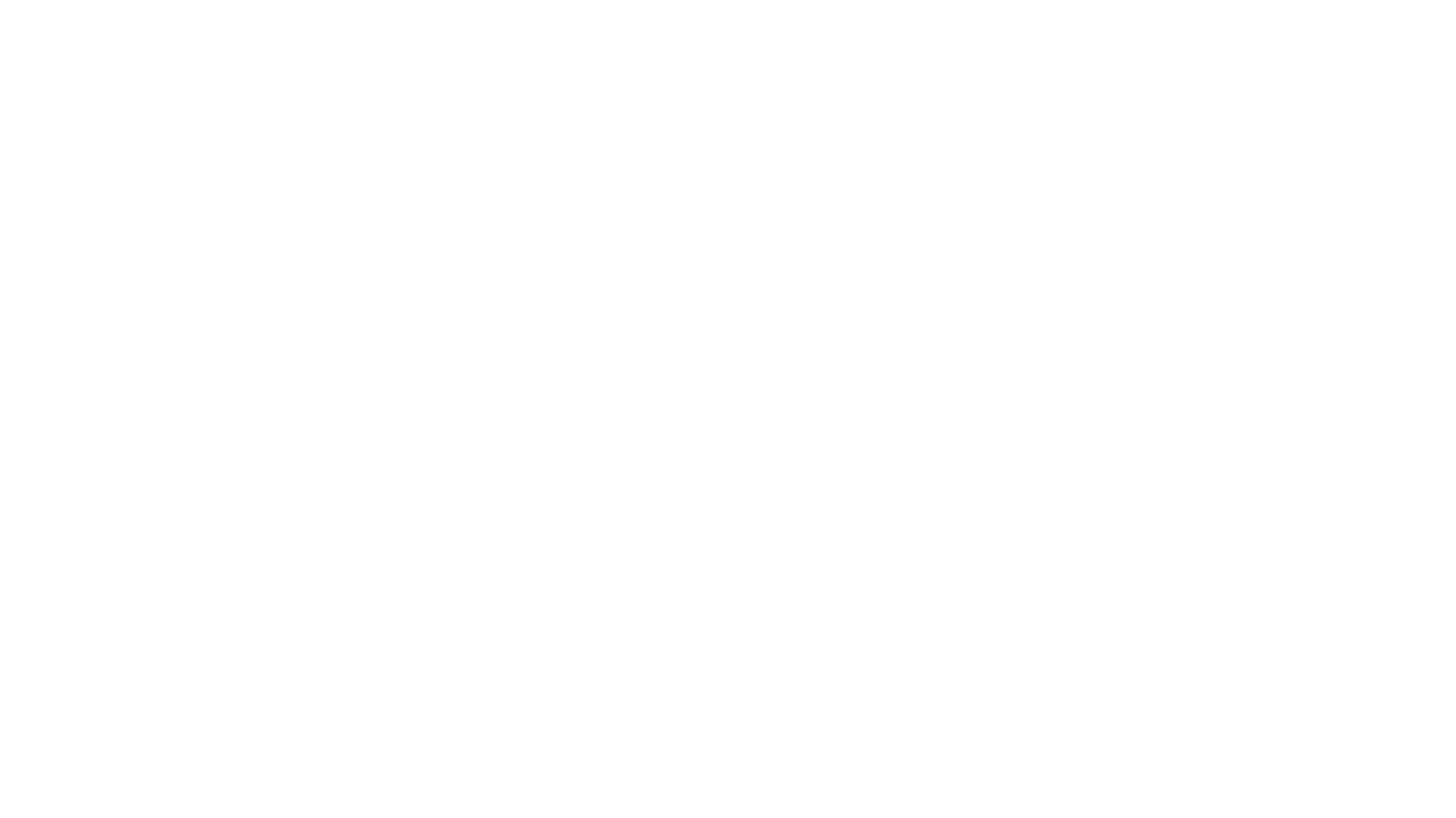

The traditional digital product engineering cycle puts a great deal of time and effort between a great idea and its release. The lag between a customer need and the moment the solution reaches customers’ hands is a vulnerable time: the market can move, the problem can change, and people may no longer want the product.

Generative AI can short-circuit that lag. Smaller teams can produce similar results faster with a sizable reduction in overhead. This is not necessarily to encourage you to cut your team, but imagine your people doing more with less.

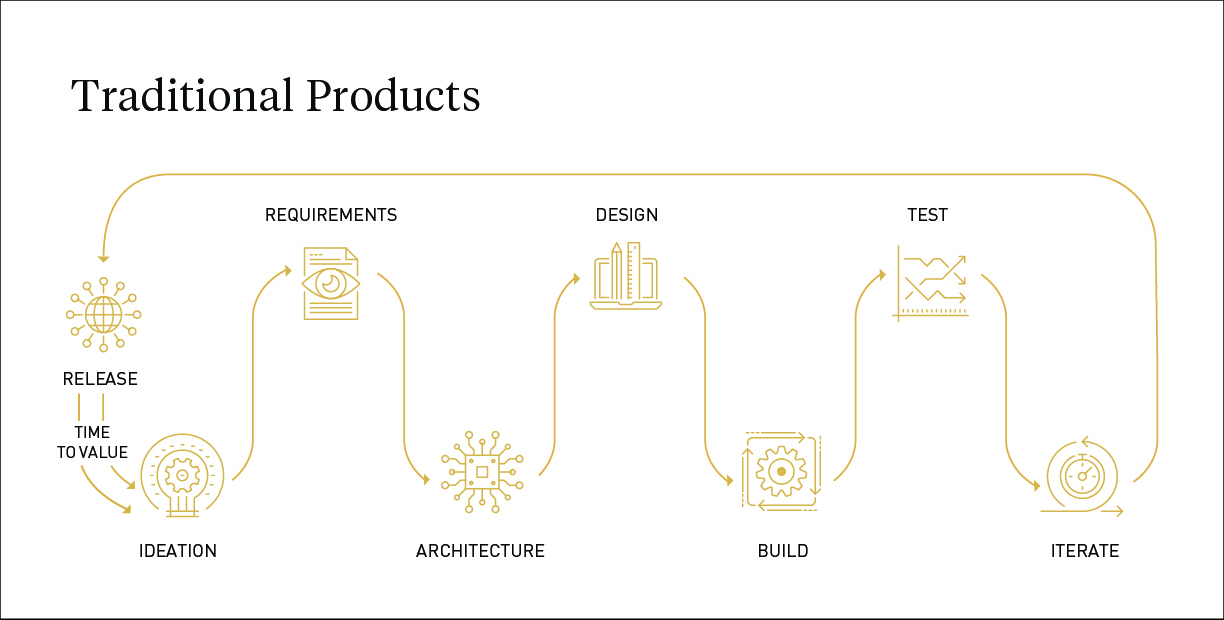

Dialexa’s Pilot with ChatGPT

To test our own enterprise readiness for generative AI adoption, Dialexa conducted a pilot program, which captured use cases and the experience of pilot participants using ChatGPT. We were concerned our people would spend more time on the tools than they saved, but largely, ChatGPT was an incredible efficiency booster. The results demonstrated:

How to Harness Generative AI for Product Development

Generative AI has made a sizable impact on our efficiency as a company—and we expect those results to only compound as AI capabilities grow. Your enterprise may be wondering what you should be doing today and what investments you should make to similarly leverage the advances of AI.

To answer these questions, let’s break down how generative AI can revolutionize the traditional product development workflow for your enterprise across three essential phases: designing and building, testing and launch, and performance and scale.

The Design & Build Phase

We’re not doom and gloom people proclaiming, “if you don’t adopt generative AI right now, your company will be gone in three years.” However, your team should already be well versed in several commercially available AI capabilities:

- Code Assistance: Your engineering team needs to be using code assistants. Not to wholesale build applications, but to efficiently solve problems.

- Project Management: Project managers should be using generative AI to break down ideas and stories for developers and designers.

- Personas: Strategy and design teams should be using generative AI to create personas, understand users, and create prototypes.

- Testing: Your team should be using even simple tools like ChatGPT to create tests automatically.

These are easy wins. As for what you need to be adopting today, this is where your money should be going:

- Code Generation: Using generative AI to take on the roles of specialization for developers that, today, require a long workflow.

- Project Ownership: Leveraging a generative AI’s giant context window to better organize work and create efficiencies.

- User Simulations: Instruct the AI to act as your persona to provide insights and uncover new capabilities.

- Automated QA: Automate tests written by ChatGPT or Bard or other tools and get them into your workflows.
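The User Simulations item above can be sketched as a small prompt builder. This is a minimal illustration, not a prescribed implementation: the `Persona` fields, the `build_persona_messages` helper, and the example persona are all hypothetical, and the role/content message shape is an assumption based on the format many chat-style model APIs accept.

```python
from dataclasses import dataclass, field


@dataclass
class Persona:
    """A hypothetical user persona; every field here is illustrative."""
    name: str
    role: str
    goals: list[str] = field(default_factory=list)
    frustrations: list[str] = field(default_factory=list)


def build_persona_messages(persona: Persona, task: str) -> list[dict[str, str]]:
    """Return chat-style messages asking a model to respond as the persona."""
    system = (
        f"You are {persona.name}, a {persona.role}. "
        f"Your goals: {'; '.join(persona.goals)}. "
        f"Your frustrations: {'; '.join(persona.frustrations)}. "
        "Stay in character and describe, step by step, what you try, "
        "what confuses you, and what you expected instead."
    )
    return [
        {"role": "system", "content": system},
        {"role": "user", "content": task},
    ]


# Example persona (invented for this sketch).
persona = Persona(
    name="Dana",
    role="regional sales manager who rarely uses desktop apps",
    goals=["approve expense reports from a phone"],
    frustrations=["long forms", "unclear error messages"],
)
messages = build_persona_messages(
    persona, "Walk through submitting an expense report in our app."
)
```

The resulting `messages` list would then be passed to whichever model client your team uses. Keeping the persona as structured data makes it easy to run the same task across many personas and compare the answers.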

Lastly, as you look forward, here is where your roadmaps should be pointing:

- LLM (Large Language Model) As Product: In a lot of scenarios, generative AI is just doing what you would write code to do already. Pieces of architecture could be replaced altogether with an LLM.

- Product Definition: Allowing LLMs to examine your data and research, validate and ideate options for your product’s future. Through that process, you’ll discover new segments available to you, new capabilities and features. The AI will essentially be the owner and facilitator of how those ideas are realized.

- Hyper-Personalization: Using persona data to deliver new, hyper-personalized experiences to customers.

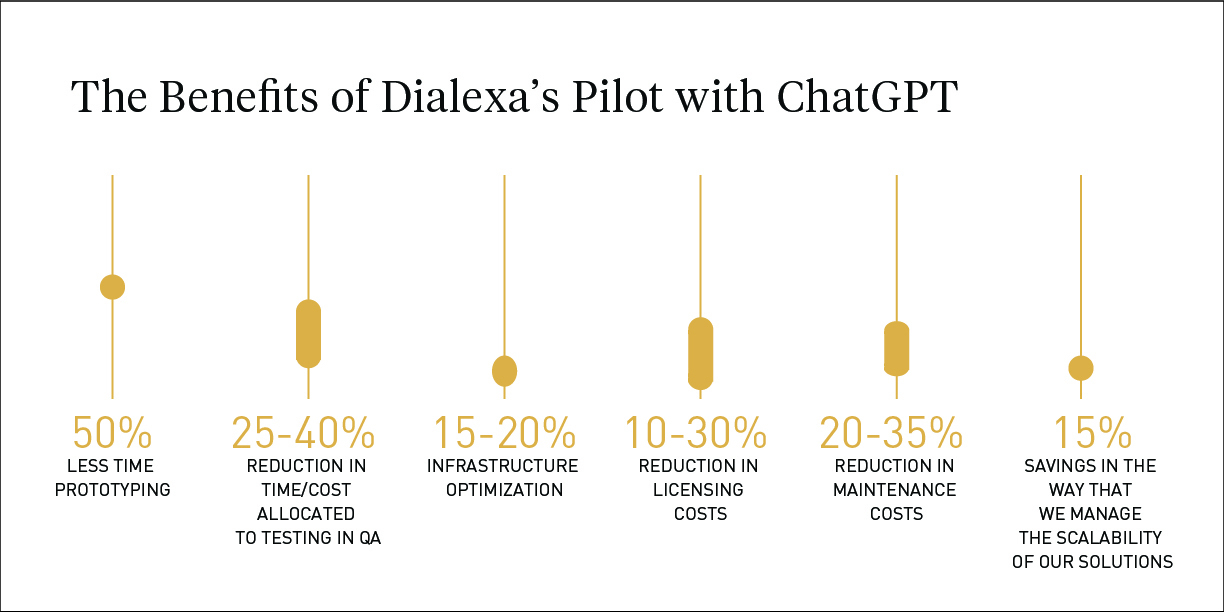

The Testing & Launch Phase

The biggest opportunities to improve testing and launch lie in more effectively addressing the needs of a product in a way that allows people to see the benefit more immediately—and respond to that better.

Where do tools like ChatGPT or LLMs come into play? ChatGPT does a great job generating simple tests, be it on a code level, business level, user level, persona level, etc.

Personas

Testing engineers and business analysts spend significant time trying to put themselves into the heads of their customers. Inevitably, they miss things. Generative AI can run through tons of possibilities a single individual would struggle to generate, as well as come up with practical implementations. This doesn’t take responsibility away from the QA person or business analyst, but sets them up to validate the LLM’s output rather than creating it themselves.

Generating Unit Tests

There are infinite ways people can break your products, whether out of ignorance or malice. Unit tests allow you to validate that each piece of your software delivers the output you expect, regardless of input.

These tests are often one of the non-functional requirements for putting a product into production. But, truth be told, just because code has been tested doesn’t mean the test was thorough. Language models let you draft tests for a wide range of likely failure cases in one go. This saves time spent creating tests and makes coverage more thorough, provided someone reviews the results.
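To make this concrete, here is the kind of edge-case suite a code assistant might draft for a reviewer to validate. It is only a sketch: `parse_quantity` and its 1 to 99 range are invented for illustration, and the tests use Python’s standard `unittest` module.

```python
import unittest


def parse_quantity(raw: str) -> int:
    """Parse a cart quantity typed by a user (hypothetical function under test)."""
    value = int(raw.strip())  # raises ValueError on non-numeric input
    if not 1 <= value <= 99:
        raise ValueError(f"quantity out of range: {value}")
    return value


class ParseQuantityTests(unittest.TestCase):
    def test_accepts_plain_number(self):
        self.assertEqual(parse_quantity("3"), 3)

    def test_ignores_surrounding_whitespace(self):
        self.assertEqual(parse_quantity("  12\n"), 12)

    def test_rejects_zero_negative_and_oversized_values(self):
        for raw in ("0", "-1", "100"):
            with self.subTest(raw=raw), self.assertRaises(ValueError):
                parse_quantity(raw)

    def test_rejects_non_numeric_and_empty_input(self):
        for raw in ("", "abc", "1.5", "1; DROP TABLE carts"):
            with self.subTest(raw=raw), self.assertRaises(ValueError):
                parse_quantity(raw)
```

Saved as a module, the suite runs with `python -m unittest`. The human job shifts from writing each case to checking that the generated cases match the real business rules.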

Integrate Robots

Product developers use robots to interact with apps like an actual user would. This is another form of testing, only instead of testing units of the program, they’re imitating human interaction with the product as a whole in a real-world scenario (using the product in a web browser or app, for example).

When you design a product, there’s a certain logical way you expect users to interact with your product. Click here. Type information here. But that’s not how people actually interact with products—and sometimes the bizarre things people can do get them stuck. LLMs can quickly identify bugs and ways users will get stuck using applications in real time.
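As a rough illustration of what “getting stuck” means in testable terms, the sketch below models an app as a graph of screens and finds screens a user can reach but never leave toward the goal. This is a deterministic stand-in, not an LLM-driven robot: the `FLOW` graph and screen names are hypothetical, and a model-guided agent would explore a real UI rather than a hand-written map.

```python
from collections import deque

# Hypothetical screen graph: screen -> {action: next screen}.
FLOW = {
    "home": {"open_cart": "cart", "open_help": "help"},
    "cart": {"checkout": "payment", "back": "home"},
    "payment": {"pay": "done", "card_declined": "error"},
    "error": {},  # no way out: a user who lands here is stuck
    "help": {"back": "home"},
    "done": {},
}


def reachable(flow, start):
    """Return every screen reachable from start via breadth-first search."""
    seen, queue = {start}, deque([start])
    while queue:
        for nxt in flow[queue.popleft()].values():
            if nxt not in seen:
                seen.add(nxt)
                queue.append(nxt)
    return seen


def stuck_screens(flow, start, goal):
    """Screens a user can reach from start but from which goal is unreachable."""
    return sorted(s for s in reachable(flow, start) if goal not in reachable(flow, s))


print(stuck_screens(FLOW, "home", "done"))  # prints ['error']
```

Here the declined-card screen has no exit, which is exactly the kind of dead end an exploratory robot would surface.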

Fine-tune and Refine

With LLMs capable of operating as developers, testers, and business analysts, you may be thinking, “Great, we’re done.”

Don’t stop there. Just as you hold retros where teams debrief on the effectiveness of their products, you can give the LLM feedback so that it provides deeper insights and points to where the process can be improved. Generative AI can help you continue to iterate and improve so long as you keep providing it with data and context.

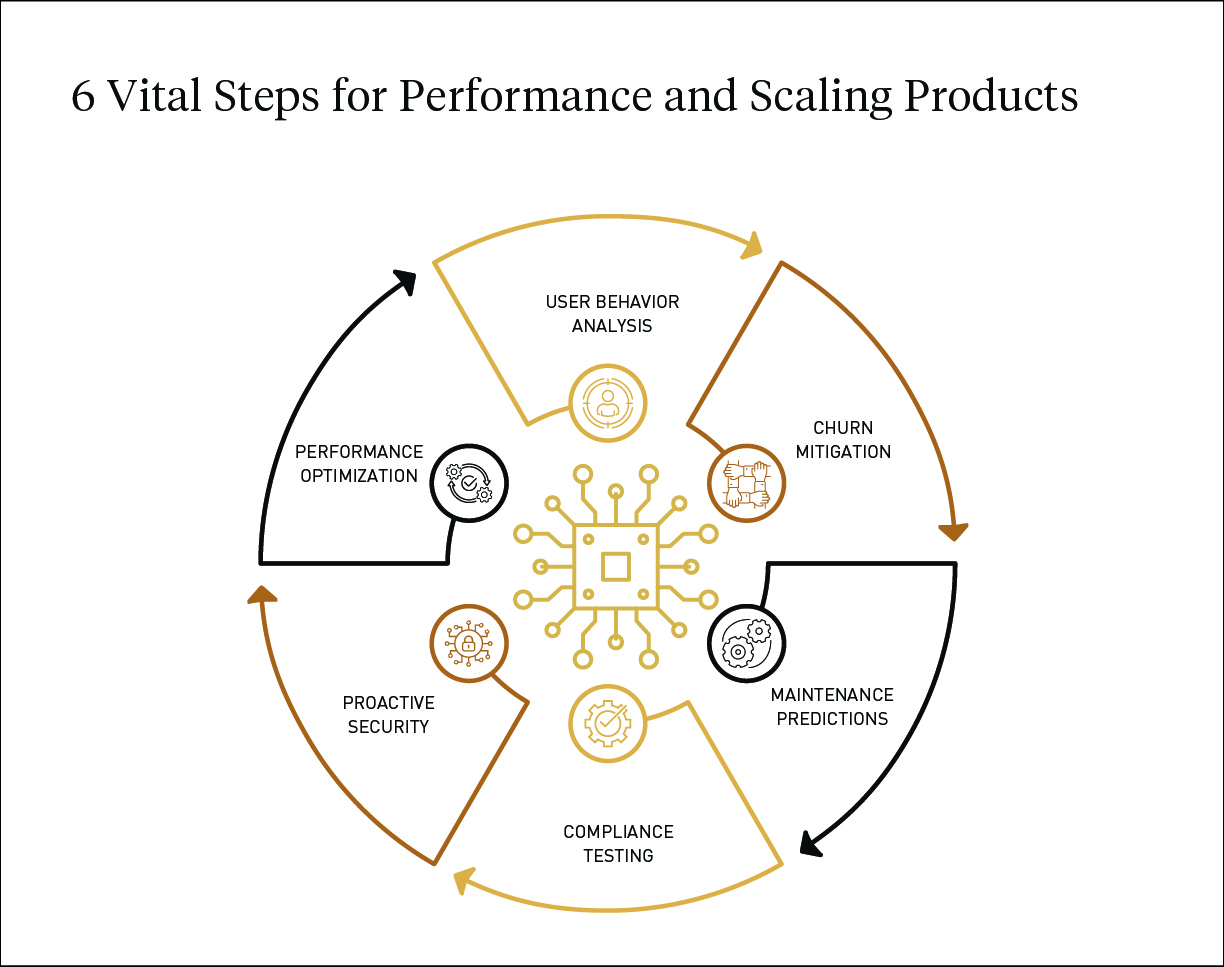

The Performance and Scale Phase

At Dialexa, we call launch day “day zero,” because that is when you start to learn if your assumptions and the way you’ve built your product hold true. After exhaustive user testing scenarios you get very close to solving problems for users, but here’s the thing: the world is not static. The problem that your product was built to solve changes over time.

Over time, problems will arise. The tool gets more traffic than expected. People use it in a slightly different way than anticipated. Users get frustrated or bored and their loyalty wanes. New security threats or compliance needs pop up. Apps need to not only be maintained, but stay ahead of the curve to proactively address these risks.

How can generative AI help?

Almost all apps already collect huge amounts of data, be it Google Analytics or application insights, and you might have log streaming services behind them to understand where users are breaking the app or falling off.

Generative AI can process all this data and see patterns you didn’t explicitly plan for. For example, one of the most difficult decisions when building a product is determining what to measure. Generative AI negates that choice by allowing you to measure everything and then analyze the data.

Example: Internal Challenges

Figuring out how a user got to an error message is usually complicated. So you log everything and feed that information into a generative AI model, which can recognize patterns, empowering you to act on the issue quickly.
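One way to prepare “log everything” data before handing it to a model is to condense it into the event paths that most often precede an error. The sketch below assumes a simple log of (session, event) pairs. The event names, the `ERROR` marker, and both helper functions are hypothetical.

```python
from collections import Counter, defaultdict

# Hypothetical event log as (session_id, event) pairs in time order.
EVENTS = [
    ("s1", "open_form"), ("s1", "upload_file"), ("s1", "submit"), ("s1", "ERROR"),
    ("s2", "open_form"), ("s2", "submit"), ("s2", "success"),
    ("s3", "open_form"), ("s3", "upload_file"), ("s3", "submit"), ("s3", "ERROR"),
    ("s4", "open_form"), ("s4", "edit_profile"), ("s4", "submit"), ("s4", "ERROR"),
]


def paths_before_error(events, window=3, error="ERROR"):
    """Count the last `window` events that preceded each error, per session."""
    history = defaultdict(list)
    paths = Counter()
    for session, event in events:
        if event == error:
            paths[tuple(history[session][-window:])] += 1
        history[session].append(event)
    return paths


def summarize(paths, top=3):
    """Render the most common pre-error paths as text suitable for a prompt."""
    return "\n".join(
        f"{count}x: {' -> '.join(path)}" for path, count in paths.most_common(top)
    )


print(summarize(paths_before_error(EVENTS)))
```

A compact summary like this fits easily into a prompt, so the model can be asked what the failing paths have in common instead of being handed raw logs.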

Example: External Challenges

On the flip side, there are plenty of things outside of your product and control: new security threats, compliance requirements, accessibility standards, etc.

Let’s say your team gets an alert from the IT department regarding a new security threat. Do you need to drop everything and put out this fire? Or does this threat not affect you, in which case fighting it would incur a large and unnecessary cost? LLMs can help you quickly evaluate whether a security threat from Node, JavaScript, Microsoft’s backend, etc. is actually going to affect your specific user population.
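Part of that triage can be a plain, deterministic check that runs before any model is involved: is the affected package even deployed, and is the deployed version in the affected range? The sketch below assumes simple dotted numeric versions. The package names, the advisory fields, and the helper functions are hypothetical and not taken from any real advisory feed.

```python
# Hypothetical data: versions deployed in your product and an advisory
# describing the affected range as [first_affected, first_fixed).
DEPLOYED = {"web-framework": "4.17.1", "image-lib": "2.3.0"}
ADVISORY = {
    "package": "web-framework",
    "first_affected": "4.0.0",
    "first_fixed": "4.17.3",
}


def parse_version(text):
    """Turn '4.17.1' into (4, 17, 1) so versions compare numerically."""
    return tuple(int(part) for part in text.split("."))


def is_affected(deployed, advisory):
    """True if the deployed version of the advised package is in the affected range."""
    version = deployed.get(advisory["package"])
    if version is None:
        return False  # package is not used at all
    return (
        parse_version(advisory["first_affected"])
        <= parse_version(version)
        < parse_version(advisory["first_fixed"])
    )


print(is_affected(DEPLOYED, ADVISORY))  # prints True
```

When the check comes back positive, the harder question of which users and features are exposed is where a model with access to your architecture notes and usage data can help.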

Compliance Testing

A lot of teams struggle with the process of compliance testing as much as they struggle with the technology. Even if you build the technology perfectly and you’re meeting compliance requirements, one rogue user who isn’t listening and doesn’t follow the designated process could be non-compliant. Generative AI allows you to pull user data to address the compliance issue and reinforce the correct process through workflows going forward.

What About Security Concerns?

There are understandable concerns regarding enterprise security when implementing generative AI into an enterprise workflow. However, in my experience, there’s little distinction between the risk of what comes out of ChatGPT and what designers and developers are already going out and repurposing from Google searches today.

Let’s take a complaint about a response from ChatGPT. Put your inquiry into a search engine and you’ll find the same question answered on forums like Stack Overflow. Scroll down and there are tons of answers ranging from an almost perfect solution to “I don’t think you’re actually a programmer.” These are essentially the source material fed to tools like ChatGPT.

Generative AI is not a replacement for humans. Or at least it won’t be for the next 100 years. Today, generative AI augments human behavior. But just like you shouldn’t take the first answer that comes out of Google and paste it into a deck for a client or send it off to a customer, the same best practices apply for tools with LLMs.

Everything that comes out of ChatGPT or another LLM in those scenarios is a possibly good answer. Generative AI can get you from 0 to 90% of an answer. It’s not perfect and will always require human oversight.

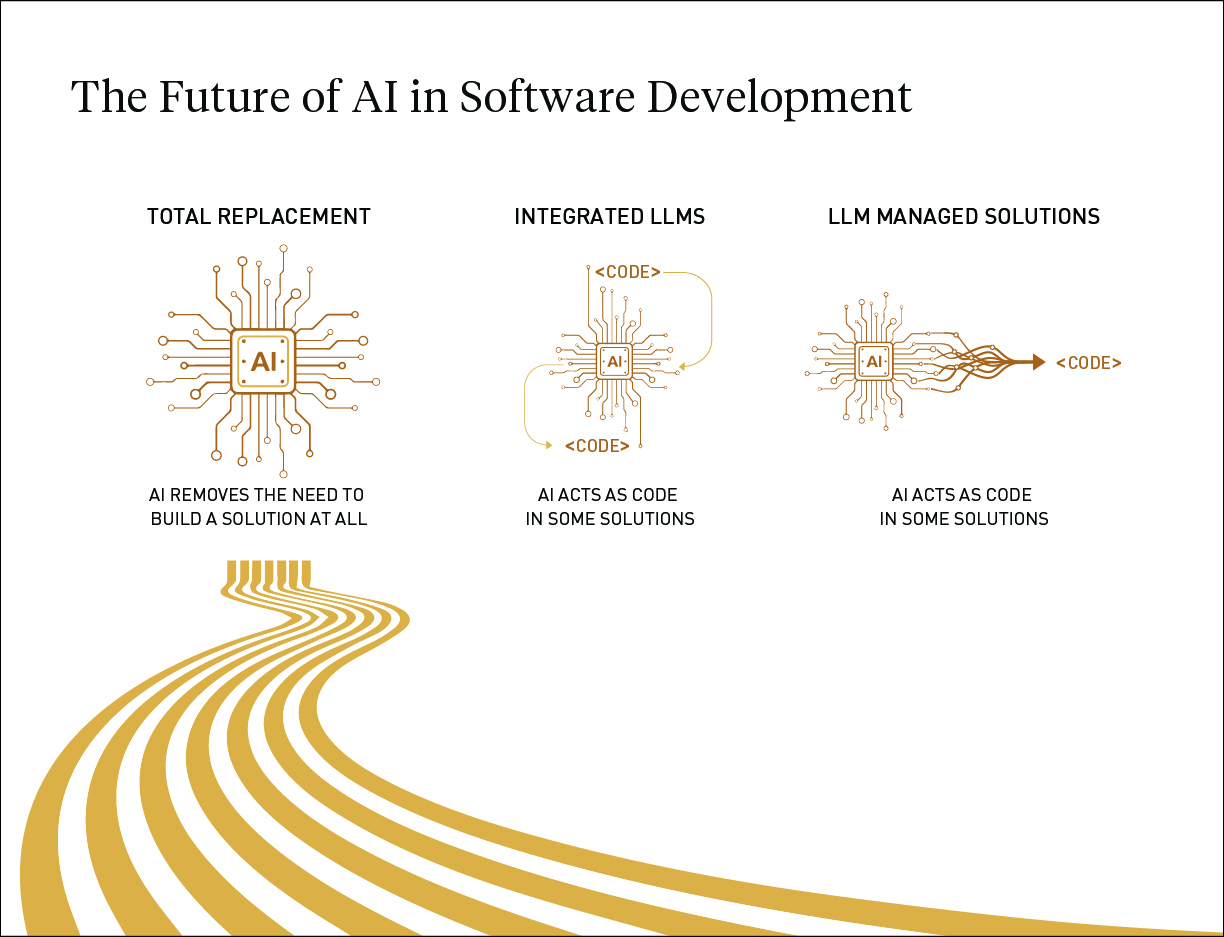

The Future

So what does AI really mean for the future of product engineering? We at Dialexa see three phases coming our way:

- AI removes the need to build a solution. For example, combining budgets across a few different data sources or merging data sources. Or perhaps creating new ticketing capabilities for a support system. We predict upwards of 70% of problems that used to require a new product can now be addressed with generative AI.

- AI acts as code. Imagine a diagram of the architecture of a system. There’s an e-commerce module, a content module, etc. LLMs are very close to being a replacement for an entire module. Where we might have had a year-long development timeline broken down across features, entire months can be replaced with an LLM.

  Now, you might counter, there are so many ways you can break LLMs. True. There are also lots of ways to break Excel. And Google. But it’s easy for us to write code around these LLMs to make sure they perform as desired and generate an expected output.

- LLM-managed solutions. In the far future, we’re moving into a space where generative AI actually creates solutions for us: generating code, testing it, putting it into production, changing experiences in real time as needed to satisfy its objectives.
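The idea of writing code around LLMs so that they generate an expected output, raised in the second phase above, can be sketched as a small validation wrapper. This is one possible shape under stated assumptions: `ask_model` stands in for whatever model client you use, and the order-extraction task and key names are hypothetical.

```python
import json


def validated_reply(ask_model, prompt, required_keys, max_attempts=3):
    """Call ask_model until it returns a JSON object containing required_keys."""
    for _ in range(max_attempts):
        raw = ask_model(prompt)
        try:
            data = json.loads(raw)
        except json.JSONDecodeError:
            continue  # reply was not JSON: ask again
        if isinstance(data, dict) and set(required_keys).issubset(data):
            return data
    raise ValueError(f"no valid reply after {max_attempts} attempts")


# Stand-in for a real model call: replies with chatter once, then valid JSON.
replies = iter(["Sure! Here you go:", '{"sku": "A-100", "quantity": 2}'])
order = validated_reply(
    lambda prompt: next(replies), "Extract the order.", {"sku", "quantity"}
)
print(order)  # prints {'sku': 'A-100', 'quantity': 2}
```

The rest of the system only ever sees replies that passed the check, and a persistent failure surfaces as an ordinary exception that existing error handling can deal with.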

Is Your Enterprise Ready for Generative AI Adoption?

We’re already seeing huge effects of generative AI on product development. Stack Overflow saw a huge drop in traffic once ChatGPT was released. Tools will be replaced, but there are also opportunities for us to use generative AI most efficiently.

Your teams are likely adopting generative AI into their workflows already. To reduce risks and maximize the benefits of using these incredible new tools, it’s best to make that adoption intentional.

Use our AI readiness assessment to learn what your enterprise needs in order to act on opportunities with generative AI. It’s only 14 questions. At the end, you’ll get a custom report emailed right to you that shows, at a high level, where your organization sits on a maturity scale and where your opportunities for improvement lie.

Take the Generative AI Readiness Assessment.

ABOUT THE AUTHOR

Joel Dykstra, Digital Architect, has more than 15 years of hands-on experience leading the architecture, design, build, and management of digital experience platforms. He is able to see and communicate the big picture in an inspiring way, and he is skilled at generating new and creative approaches to problems when all solutions seem exhausted.